ELESIG London 3rd Meeting – Evaluation By Numbers

By Mira Vogel, on 13 July 2016

The third ELESIG London event, ‘Evaluation By Numbers’, took place over two hours on July 7th. Building on the successful format of our last meeting, we invited two presenters on the theme of ‘proto-analytics’ – an important aspect of institutional readiness for learning analytics which empowers individuals to work with their own log data to come up with theories about what to do next. There were 15 participants with a range of experiences and interests, including artificial intelligence, ethics, stats and data visualisation, and a range of priorities including academic research, academic development, data security, and real-time data analysis.

After a convivial round of introductions there was a talk from Michele Milner, Head of Centre for Excellence in Teaching and Learning at the University of East London, titled Empowering Staff And Students. Determined to avoid data-driven decision making, UEL’s investigations had confirmed that most staff were unenthusiastic and wary about working with log data. This is normal in the sector and probably attributable to a combination of inexperience and overwork. The UEL project had different strands. One was attendance monitoring, feeding into a student engagement metric with more predictive power, including the correlation between engagement (operationalised as e.g. library and VLE access, and data from the tablets students are issued) and achievement. This feeds a student retention app, along with demographic weightings. Turnitin and Panopto (lecture capture) data have so far been elusive, but UEL is persisting on the basis that these gross measures do correlate.

The project gave academic departments a way to visualise retention as an overall red-amber-green rating, and to simulate the expected effects of different interventions. The feedback they received from academics was broadly positive but short of enthusiastic, with good questions about cut-off dates, workload allocation, and the nature and timing of interventions. Focus group work with students revealed that there was low awareness of data collection, that students weren’t particularly keen to see the data, and that if presented with it they would prefer bar charts by date rather than comparators with other students. We discussed the ethics of data collection, including the possibility of student opt-in or opt-out of opening their anonymised data set.

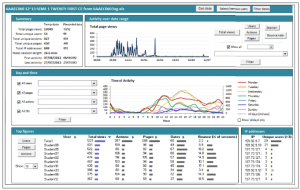

Our next speaker was Andreas Konstantinidis from King’s College London, on Utilising Moodle Logs (slides). He attributes the low number of educators currently working with VLE data to limitations of logs. In Moodle’s case this is particularly to do with limited filtering, and the exclusion of some potentially important data including Book pages and links within Labels. To address this, he and his colleague Cat Grafton worked on some macros to allow individual academics to import and visualise logs downloaded from their VLE (KEATS) in an MS Excel spreadsheet.

To dodge death by data avarice they first had to consider which data to include, deciding on the following. Mean session length does not firmly correspond to anything, but the fluctuations are interesting. Bounce rate can indicate that students are having difficulty finding what they need. Time of use, combining two or more filters, can inform plans about when to schedule events or release materials. You can also see the top and bottom 10 students engaging with Moodle, and the top and bottom resources used – this data can be an ice breaker for discussing reasons and support. IP addresses may reveal where students are gathering, e.g. a certain IT room, which in turn may inform decisions about where to reach students.
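For readers who would rather experiment outside Excel, here is a minimal Python sketch of how measures like these could be derived from a list of log events. It is not the KEATS Analytics workbook or its macros. The two-column row layout, the 30-minute inactivity cut-off that separates sessions, and the definition of a bounce as a one-event session are all assumptions made for illustration, and `SAMPLE_LOG`, `split_sessions` and `summarise` are hypothetical names.

```python
from collections import Counter, defaultdict
from datetime import datetime, timedelta

# Hypothetical log rows: (user id, ISO timestamp). A real Moodle log export
# has more columns and a different layout, so adapt the parsing to your file.
SAMPLE_LOG = [
    ("s1", "2016-07-01T09:00:00"),
    ("s1", "2016-07-01T09:10:00"),
    ("s1", "2016-07-01T14:00:00"),
    ("s2", "2016-07-01T09:05:00"),
    ("s2", "2016-07-01T09:25:00"),
]

SESSION_GAP = timedelta(minutes=30)  # assumed inactivity cut-off


def split_sessions(rows, gap=SESSION_GAP):
    """Group each user's events into sessions separated by more than `gap`."""
    by_user = defaultdict(list)
    for user, stamp in rows:
        by_user[user].append(datetime.fromisoformat(stamp))
    sessions = []
    for user, times in by_user.items():
        times.sort()
        current = [times[0]]
        for t in times[1:]:
            if t - current[-1] > gap:
                sessions.append((user, current))  # close the finished session
                current = [t]
            else:
                current.append(t)
        sessions.append((user, current))
    return sessions


def summarise(rows):
    """Return a few of the measures discussed above (expects at least one row)."""
    sessions = split_sessions(rows)
    # Session length in minutes: time between first and last event.
    lengths = [(s[-1] - s[0]).total_seconds() / 60 for _, s in sessions]
    # Assumption: a "bounce" is a session containing a single event.
    bounces = sum(1 for _, s in sessions if len(s) == 1)
    hours = Counter(datetime.fromisoformat(stamp).hour for _, stamp in rows)
    events_per_user = Counter(user for user, _ in rows)
    return {
        "mean_session_minutes": sum(lengths) / len(lengths),
        "bounce_rate": bounces / len(sessions),
        "events_by_hour": dict(hours),
        "most_active": events_per_user.most_common(10),
    }


if __name__ == "__main__":
    print(summarise(SAMPLE_LOG))
```

Changing `SESSION_GAP` changes both the mean session length and the bounce rate, which is one reason to read the fluctuations rather than the absolute values.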

King’s have made KEATS Analytics available to all (includes workbook), and you can download it from http://tinyurl.com/ELESIG-LA. It currently supports Moodle 2.6 and 2.8, with 3.X coming soon. At UCL we’re on 2.8 only for the next few weeks, so if you want to work with KEATS Analytics here’s some guidance for downloading your logs now.

As Michele (quoting Eliot) said, “Hell is a place where nothing connects with nothing”. Although it is not always fit to use immediately, data abounds – so what we’re looking for now are good pedagogical questions which data can help to answer. I’ve found Anna Lea Dyckhoff’s meta-analysis of tutors’ action research questions helpful. To empower individuals and build data capabilities in an era of potentially data-driven decision-making, a good start might be to address these questions in short worksheets which take colleagues who aren’t statisticians through statistical analysis of their data. If you are good with data and its role in educational decision-making, please get in touch.

A participant pointed us to a series of podcasts from Jisc around the ethical and legal issues of learning analytics. Richard Treves has a write-up of the event and my co-organiser Leo Havemann has collected the tweets. For a report on the current state of play with learning analytics, see Sclater and colleagues’ April 2016 review of UK and International Practice. Sam Ahern mentioned there are still places on a 28th July data visualisation workshop being run by the Software Sustainability Institute.

To receive communications from us, including details of our next ELESIG London meeting, please sign up to the ELESIG London group on Ning. It’s free and open to all with an interest in educational evaluation.
